How Might We Enable Safe, Confident Navigation for Blind Individuals Through Wearable Tech?

Smart Assistance for the Blind

Every day, sighted people move through the world with an invisible safety net, able to see a curb before they trip or dodge an obstacle without a second thought. But for someone who is blind, that safety net does not exist. What we take for granted could mean danger, injury, or worse for them, all because they can’t see what’s coming.

Key Research Topics:

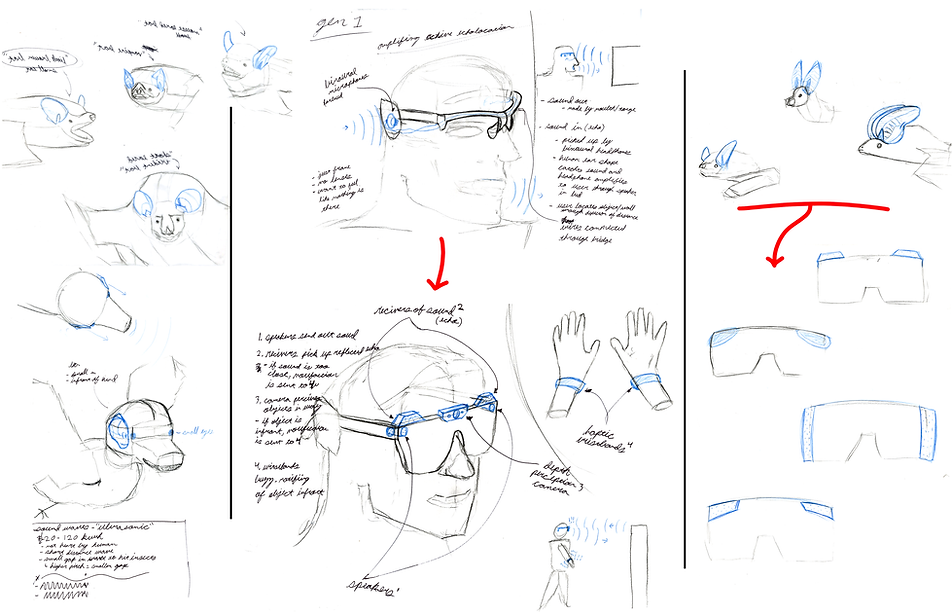

Sketching 1:

- Initial biomimicry study on echolocation and how to incorporate it into a wearable design

Finding First Form

Understanding the Problem Personally:

Before diving into the meat of this project, I wanted to understand firsthand what it is like to navigate without sight. I had the pleasure of connecting with Alaina, Abigail, and Crystal, who are all blind or visually impaired. They were more than happy to let me spend the day with them walking around their college campus. They led me to their frustration spots, where they tend to bang their shins or sternum, or even drop off small ledges (all pictured). From this, I gained a clear understanding of specific pain points that are not currently being addressed. I was also able to put 3D printed models of possible forms in their hands so they could feel them and give me feedback on which direction to pursue.

Sketching 2:

- Designing around conductive parts of the skull for haptic feedback

- Integrating arms to secure around head better

- Integrating placement of technological housing

Sketching in VR:

Prototyping:

More than 13 3D printed prototypes were made to arrive at the current form and fit head ergonomics. A working prototype has been started but is not yet complete.

Result

I was recognized as a top-three student in my class and selected by Syracuse University to present my work in New York City to alumni and industry professionals.

I am beyond grateful for the opportunity to focus on improving accessibility for people who are blind through my work.

Technology in Action

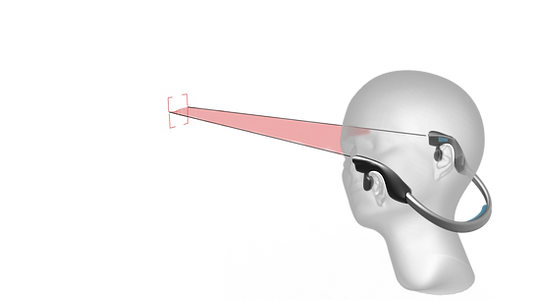

Object Detection

Incoming objects are picked up by two depth-perception cameras placed on the sides of the head. This is possible through triangulation: if we know the fixed distance between the cameras and the angle at which each camera sees the object, the distance to the object can be calculated.
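To make the triangulation idea concrete, here is a minimal sketch in Python. It is not the code running on the prototype. It assumes each camera reports the angle between the line joining the two cameras (the baseline) and its line of sight to the object. The function name, the 0.15 m baseline, and the example angles are all hypothetical values chosen for illustration.

```python
import math


def triangulate_distance(baseline_m, angle_left_deg, angle_right_deg):
    """Perpendicular distance from the camera baseline to an object.

    baseline_m: fixed distance between the two cameras (assumed known).
    angle_*_deg: angle at each camera between the baseline and the
    line of sight to the object (assumed to come from the cameras).
    """
    a = math.radians(angle_left_deg)
    b = math.radians(angle_right_deg)
    # The two sight lines only meet in front of the wearer when both
    # angles are positive and together add up to less than 180 degrees.
    if a <= 0 or b <= 0 or a + b >= math.pi:
        raise ValueError("sight lines do not intersect in front of the cameras")
    # Law of sines on the triangle formed by the two cameras and the object.
    return baseline_m * math.sin(a) * math.sin(b) / math.sin(a + b)


if __name__ == "__main__":
    # Hypothetical example: cameras 0.15 m apart, object seen at 85 degrees
    # from the baseline by both cameras.
    print(round(triangulate_distance(0.15, 85, 85), 2), "m")
```

The closer the two angles get to 90 degrees, the farther away the object is, and small angle errors turn into large distance errors. That is one reason a wider spacing between the cameras helps at longer range.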

Object Identification

Throughout my research, I learned that not only do the blind bump into many things, but they also want to learn what it is they are bumping into. This could be possible through reversing how image-generating AI works: instead of producing an image of _____ when asked, it would produce a description of an object scanned through the camera.

Haptic Feedback

Once an object is picked up by the cameras, the system can then notify the user and guide them out of harm's way. The two best ways to do this would be through haptic feedback (vibration) or audible feedback (bone-conducting headphones).
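One way the vibration cue could be driven is by scaling its strength with how close the object is. The Python sketch below is only an illustration of that idea, not the project's implementation. The function name and the 3.0 m and 0.3 m thresholds are assumptions, and real values would need to come from user testing.

```python
def vibration_intensity(distance_m, max_range_m=3.0, min_range_m=0.3):
    """Map an object's distance to a vibration strength from 0.0 to 1.0.

    max_range_m and min_range_m are hypothetical thresholds: objects
    beyond max_range_m are ignored, and objects at or inside
    min_range_m trigger full-strength vibration.
    """
    if distance_m >= max_range_m:
        return 0.0
    if distance_m <= min_range_m:
        return 1.0
    # Linear ramp between the two thresholds: closer means stronger.
    return (max_range_m - distance_m) / (max_range_m - min_range_m)


if __name__ == "__main__":
    for d in (4.0, 2.0, 1.0, 0.2):
        print(d, "m ->", round(vibration_intensity(d), 2))
```

A linear ramp is the simplest choice. A curve that rises faster at close range might feel more urgent, and that is something to settle by testing with users.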

Text / Signage Assist

Similar to object identification, I learned that the blind need a more efficient way to learn about their surroundings than through Braille. Braille signs are often hard to locate and can be unsanitary to touch in places such as bathrooms. With AI also acting as a reader, the blind can get audible notes on things such as food labels, directions in airports, and floor numbers.

Modular lens for preferred wear

(magnetic attachment)